IShot: Local Descriptor for Robust Place Recognition using LiDAR Intensity

Place recognition is a challenging problem in mobile robotics, especially in unstructured environments or under viewpoint and illumination changes.

Most LiDAR-based methods rely on geometrical features to overcome such challenges, since scene geometry is generally invariant to these changes, whereas camera-based solutions tend to be affected by them significantly. Compared to cameras, however, LiDARs lack the strong and descriptive appearance information that imaging can provide.

To combine the benefits of geometry and appearance, we propose coupling the conventional geometric information from the LiDAR with its calibrated intensity return.

This strategy extracts highly useful information in the form of a new descriptor design, coined ISHOT, which outperforms popular state-of-the-art geometry-only descriptors by a significant margin in our local descriptor evaluation.
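To make the idea of coupling geometry with intensity concrete, here is a minimal toy sketch. It is not the ISHOT descriptor itself, whose construction is not detailed on this page. The function names, the binning scheme, and the assumption that intensity is calibrated to the range [0, 1] are all hypothetical choices made for illustration.

```python
import math


def histogram(values, lo, hi, bins):
    """Count values into equal-width bins over [lo, hi]; out-of-range values are clamped."""
    counts = [0.0] * bins
    width = (hi - lo) / bins
    for v in values:
        idx = int((v - lo) / width)
        counts[min(max(idx, 0), bins - 1)] += 1.0
    return counts


def toy_descriptor(keypoint, neighbours, radius, bins=4):
    """Toy geometry-plus-intensity descriptor (illustrative only, not ISHOT).

    keypoint and neighbours are (x, y, z, intensity) tuples,
    with intensity assumed to lie in [0, 1].
    """
    kx, ky, kz, ki = keypoint
    # Geometric part: distances from the keypoint to each neighbour.
    dists = [math.dist((kx, ky, kz), n[:3]) for n in neighbours]
    # Appearance part: intensity differences relative to the keypoint.
    diffs = [n[3] - ki for n in neighbours]
    vec = histogram(dists, 0.0, radius, bins) + histogram(diffs, -1.0, 1.0, bins)
    # L2-normalise so descriptors are comparable across neighbourhood sizes.
    norm = math.sqrt(sum(c * c for c in vec)) or 1.0
    return [c / norm for c in vec]
```

In this sketch, two neighbourhoods with identical geometry but different intensity patterns yield different descriptors, which is the extra discriminative signal a geometry-only descriptor cannot provide.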

To complete the framework, we furthermore develop a probabilistic keypoint voting place recognition algorithm, leveraging the new descriptor and yielding sublinear place recognition performance. The efficacy of our approach is validated in challenging global localization experiments in large-scale built-up and unstructured environments.
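The general idea of keypoint voting can be sketched as follows. This is a simplified illustration under stated assumptions, not the probabilistic algorithm from the paper: it uses a plain vote count rather than a probabilistic model, and a brute-force nearest-neighbour search that is linear in the database size. Achieving sublinear lookups would require a search index such as a k-d tree. The function name and the data layout are hypothetical.

```python
import math
from collections import Counter


def vote_for_place(query_descriptors, database):
    """Return (best_place, vote_share) for a set of query keypoint descriptors.

    database is a list of (place_id, descriptor) pairs (hypothetical layout).
    Each query descriptor votes for the place of its nearest database descriptor.
    """
    if not query_descriptors:
        raise ValueError("at least one query descriptor is required")
    votes = Counter()
    for q in query_descriptors:
        # Brute-force nearest neighbour in descriptor space (linear scan).
        place_id, _ = min(database, key=lambda entry: math.dist(q, entry[1]))
        votes[place_id] += 1
    best_place, count = votes.most_common(1)[0]
    # The vote share acts as a crude confidence score, not a calibrated probability.
    return best_place, count / len(query_descriptors)
```

A low vote share for the winning place suggests an ambiguous match, which a practical system would likely reject or verify further.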

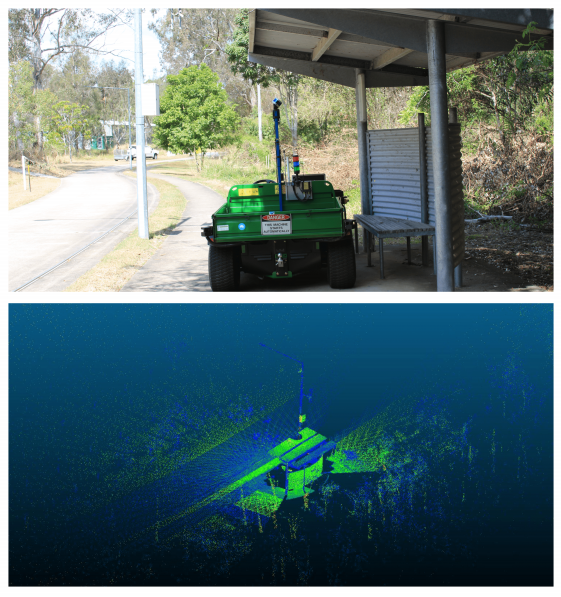

Fig. 1: A challenging scenario (upper) from our occlusion dataset. The view from the LiDAR (mounted on top of the central pole) is heavily occluded by the nearby structure. With our proposed algorithm, the vehicle is able to recover its position in the global map using only the local LiDAR point cloud (lower image, overhead view, coloured by intensity) without prior sensor or motion information (e.g., GPS or IMU).

Guo, Jiadong & Borges, Paulo & Park, Chanoh & Gawel, Abel. (2019). Local Descriptor for Robust Place Recognition Using LiDAR Intensity. IEEE Robotics and Automation Letters. PP. 1-1. 10.1109/LRA.2019.2893887.

To learn more, contact us.
