Paper: Skeleton Driven Non-Rigid Motion Tracking and 3D Reconstruction


This paper presents a method that tracks the non-rigid surface motion of a human performance and reconstructs it in 3D using an RGB-D camera.

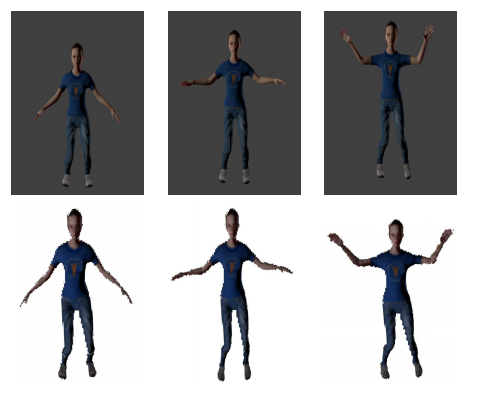

Fig. 1: Qualitative results of live reconstruction from ‘Exercise’ data sequence in our synthetic dataset. The upper row corresponds to images of different frame indices and the lower row shows respective 3D reconstructions.

3D reconstruction of marker-less human performance is a challenging problem due to the large range of articulated motions and considerable non-rigid deformations.

Current approaches rely on local optimization for tracking. These methods need many iterations to converge and can get stuck in local minima during sudden articulated movements.

“We propose a puppet model-based tracking approach using a skeleton prior, which provides a better initialization for tracking articulated movements,” said Dr Peyman Moghadam (Primary Investigator).
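This summary does not say how the skeleton prior is turned into an initialization. As a hedged illustration only, one widely used way to let a skeleton drive a surface is linear blend skinning (LBS): each vertex is moved by a weighted blend of its joints' rigid transforms, so a large joint rotation immediately places the surface near its new pose instead of leaving a local optimizer to find it. This is an assumption about the general idea, not the paper's formulation, and the function names below are hypothetical.

```python
import numpy as np


def rotation_z(angle_rad):
    """4x4 homogeneous rotation about the z-axis."""
    c, s = np.cos(angle_rad), np.sin(angle_rad)
    T = np.eye(4)
    T[:2, :2] = [[c, -s], [s, c]]
    return T


def joint_transform(rotation, pivot):
    """Rotate about a joint position: move pivot to origin, rotate, move back."""
    to_origin = np.eye(4)
    to_origin[:3, 3] = -pivot
    back = np.eye(4)
    back[:3, 3] = pivot
    return back @ rotation @ to_origin


def linear_blend_skinning(vertices, weights, transforms):
    """Warp rest-pose vertices by blending per-joint rigid transforms.

    vertices:   (N, 3) rest-pose positions
    weights:    (N, J) skinning weights, each row summing to 1
    transforms: (J, 4, 4) homogeneous joint transforms
    """
    homo = np.hstack([vertices, np.ones((len(vertices), 1))])  # (N, 4)
    blended = np.einsum("nj,jab->nab", weights, transforms)     # per-vertex (4, 4)
    warped = np.einsum("nab,nb->na", blended, homo)              # (N, 4)
    return warped[:, :3]
```

For example, take a three-vertex "arm" along the x-axis at (0,0,0), (1,0,0) and (2,0,0), with the first vertex bound to a fixed shoulder joint, the last bound to an elbow at (1,0,0), and the middle vertex split 50/50. Rotating the elbow by 90° about z moves the end vertex to approximately (1,1,0) in one step, while the other two stay put.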

The proposed approach uses an aligned puppet model to estimate correct correspondences for human performance capture. We also contribute a synthetic dataset which provides ground truth locations for frame-by-frame geometry and skeleton joints of human subjects.
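Why alignment matters for correspondences can be seen with a simple closest-point search: it only returns correct matches when the model already sits near the observed surface. The sketch below assumes brute-force nearest-neighbour matching with a distance cut-off (`max_dist`, a hypothetical parameter) to reject outliers; it is a generic illustration, not the correspondence scheme used in the paper.

```python
import numpy as np


def nearest_correspondences(model_points, observed_points, max_dist):
    """For each model point, find the closest observed point.

    Returns (model_index, observed_index) pairs whose distance is below
    max_dist; farther matches are rejected as likely outliers.
    """
    diff = model_points[:, None, :] - observed_points[None, :, :]  # (M, K, 3)
    dist = np.linalg.norm(diff, axis=2)                            # (M, K)
    nearest = np.argmin(dist, axis=1)                              # (M,)
    nearest_dist = dist[np.arange(len(model_points)), nearest]
    keep = nearest_dist < max_dist
    return [(int(i), int(j)) for i, j in zip(np.nonzero(keep)[0], nearest[keep])]
```

If the model has first been posed by the skeleton, most of its points land within `max_dist` of the right observations; a badly misaligned model would instead produce rejected or wrong pairs, which is the failure mode the aligned puppet model is meant to avoid.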

Experimental results show that our approach is more robust when faced with sudden articulated motions, and provides better 3D reconstruction compared to the existing state-of-the-art approaches.

Elanattil, Shafeeq; Moghadam, Peyman; Denman, Simon; Sridharan, Sridha; Fookes, Clinton. Skeleton Driven Non-rigid Motion Tracking and 3D Reconstruction. In: The International Conference on Digital Image Computing: Techniques and Applications (DICTA); 10-13 December 2018; Canberra, Australia. IEEE; 2018. pp. 1-8. Published 2018-12-10. DOI: https://doi.org/10.1109/DICTA.2018.8615797

Elanattil, Shafeeq; Moghadam, Peyman (2019): Synthetic Human Model Dataset for Skeleton Driven Non-rigid Motion Tracking and 3D Reconstruction. v1. CSIRO. Data Collection. https://doi.org/10.25919/5c495488b0f4e


For more information, contact Dr Peyman Moghadam.
