Distributed & Large Scale Vision

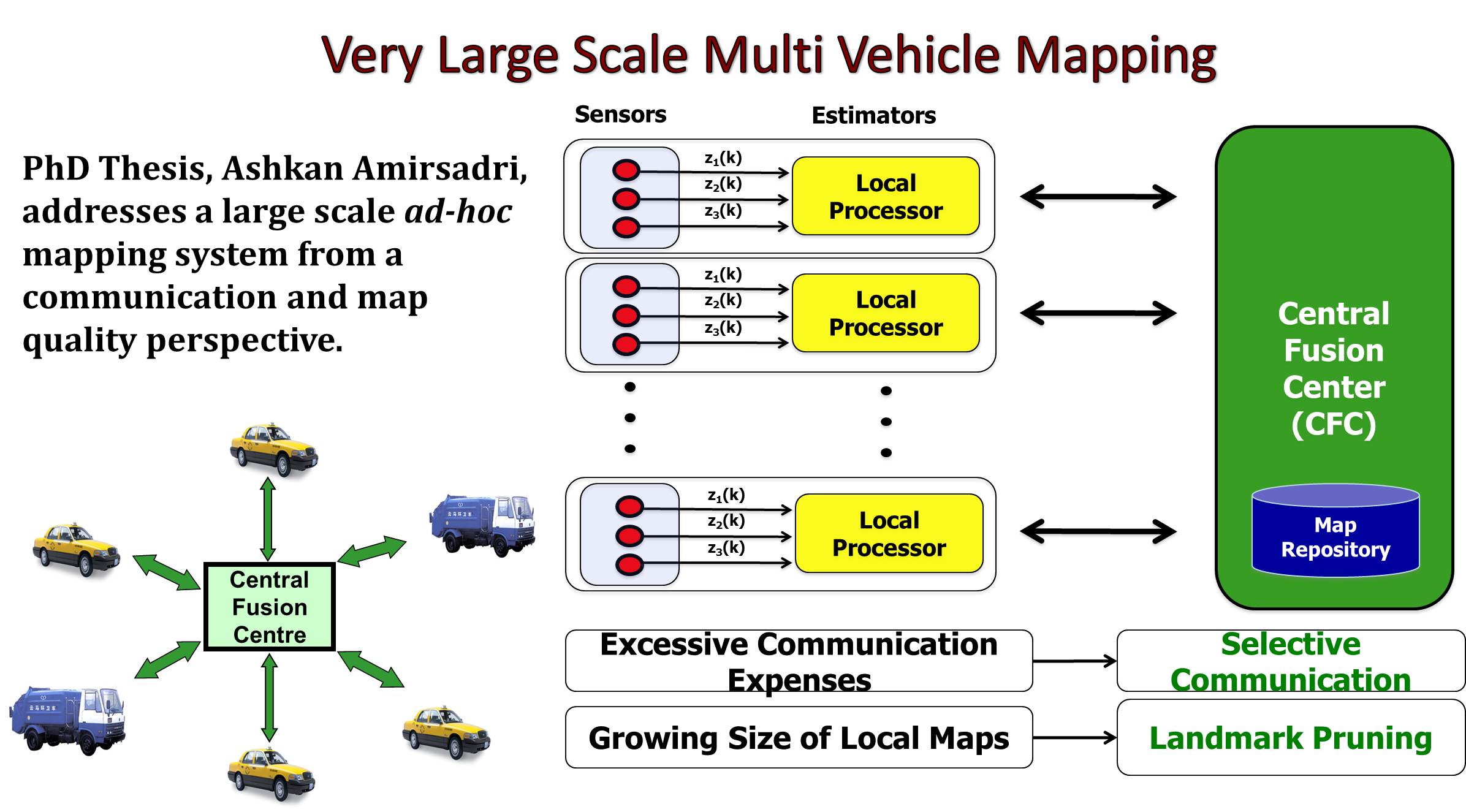

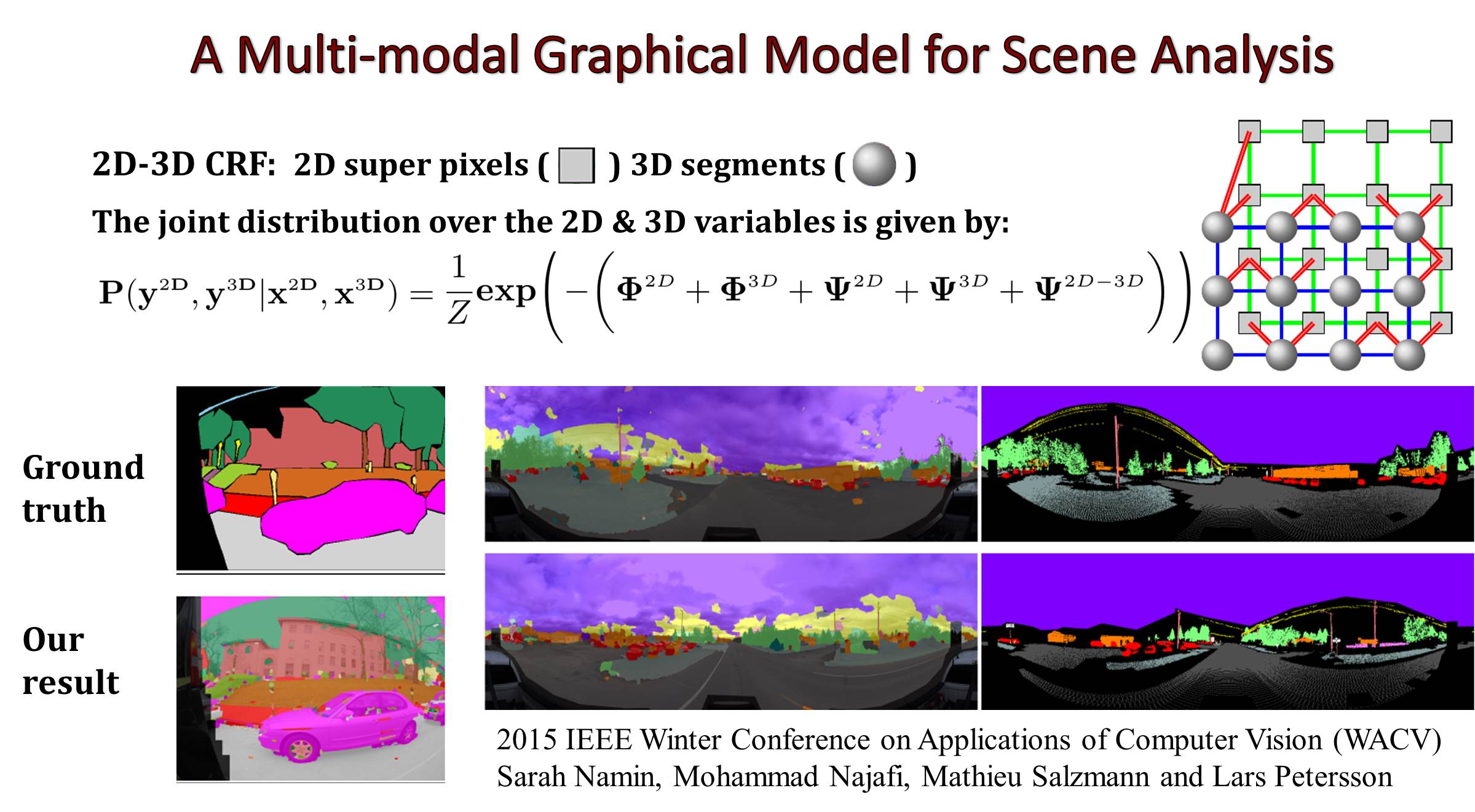

We address the fundamental questions that arise when a problem becomes very large and when the task is distributed over many agents. Typically, the algorithms need to have low computational complexity and a small memory footprint, and be parallelizable. Specifically, we work on semantic labelling, object detection/recognition, registration and ad-hoc mapping.

A range of practical computer vision applications require the ability to detect and recognise objects, to label pixels with the class they belong to, and to align new data with what has previously been seen. When an application is distributed, large scale in nature or otherwise compute limited, many computer vision algorithms are simply too slow or require data transfers too large to be of any practical use. This is a hard problem to address without sacrificing too much of the original accuracy.

The solution to many of these problems lies in finding algorithms that have low computational complexity, need few memory accesses and are massively parallelizable, while still preserving the accuracy of their more complex counterparts. A key difference in our approach is that we let ourselves be inspired by the operations current hardware excels at and design algorithms to match. By exploring complementary sensors, such as laser range scanners and multispectral cameras, the underlying problem can be simplified, enabling fast and accurate solutions.