Locus: LiDAR-based Place Recognition using Spatiotemporal Higher-Order Pooling (ICRA 2021)

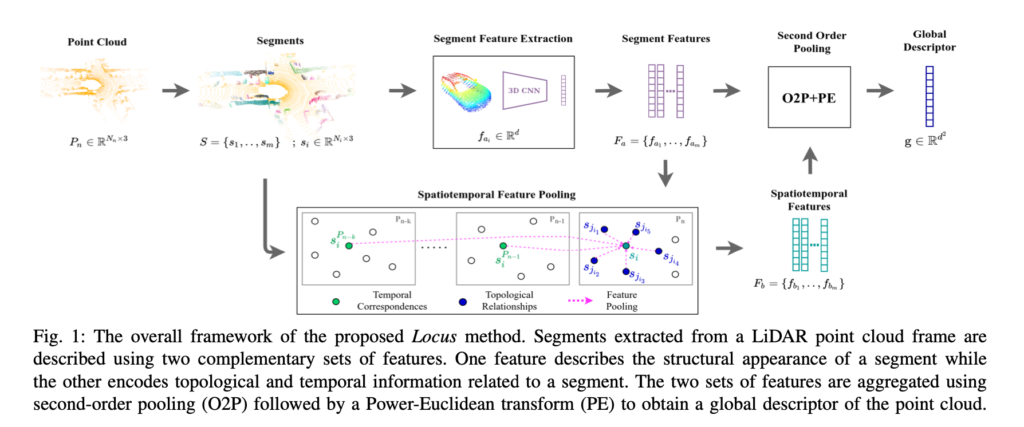

LiDAR-based Place Recognition enables the estimation of a globally consistent map and trajectory by providing non-local constraints in Simultaneous Localisation and Mapping (SLAM). This paper presents Locus, a novel place recognition method using 3D LiDAR point clouds in large-scale environments. We propose a method for extracting and encoding topological and temporal information related to components in a scene and demonstrate how the inclusion of this auxiliary information in place description leads to more robust and discriminative scene representations. Second-order pooling along with a non-linear transform is used to aggregate these multi-level features to generate a fixed-length global descriptor, which is invariant to the permutation of input features. The proposed method outperforms state-of-the-art methods on the KITTI dataset. Furthermore, Locus is demonstrated to be robust across several challenging situations such as occlusions and viewpoint changes in 3D LiDAR point clouds. The open-source implementation is available at: https://github.com/csiro-robotics/locus
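The aggregation step described above can be illustrated with a minimal NumPy sketch. It is not the released implementation. It assumes each scene component (segment) carries two feature vectors, pools their outer products with an element-wise max, and applies a power transform to the singular values as the non-linear step. The function name `second_order_pool`, the exponent `alpha`, the feature dimensions, and the choice of max pooling are all illustrative assumptions.

```python
import numpy as np

def second_order_pool(feats_a, feats_b, alpha=0.5):
    """Pool two per-segment feature sets into one fixed-length descriptor.

    feats_a: array of shape (n_segments, d_a), e.g. topological features.
    feats_b: array of shape (n_segments, d_b), e.g. temporal features.
    Returns a vector of length d_a * d_b, whatever n_segments is.
    """
    # Outer product per segment: shape (n_segments, d_a, d_b).
    outer = np.einsum("ni,nj->nij", feats_a, feats_b)
    # Element-wise max over segments; the result does not depend on segment order.
    pooled = outer.max(axis=0)
    # Assumed non-linear transform: raise the singular values to the power alpha.
    u, s, vt = np.linalg.svd(pooled, full_matrices=False)
    normalised = (u * s**alpha) @ vt
    return normalised.ravel()

rng = np.random.default_rng(0)
topo = rng.standard_normal((30, 8))      # hypothetical topological features
temporal = rng.standard_normal((30, 8))  # hypothetical temporal features

desc = second_order_pool(topo, temporal)

# Shuffling the segments leaves the descriptor unchanged.
perm = rng.permutation(30)
desc_shuffled = second_order_pool(topo[perm], temporal[perm])

# A scene with a different number of segments yields the same descriptor length.
desc_small = second_order_pool(topo[:12], temporal[:12])
```

Because the max is taken over the segment axis, the descriptor length depends only on the feature dimensions, which is what makes it usable as a fixed-length global descriptor for scenes with varying numbers of segments.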

Locus is a global descriptor for large-scale place recognition using sequential 3D LiDAR point clouds. It encodes the topological relationships and temporal consistency of scene components to obtain a discriminative, viewpoint-invariant scene representation.
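A global descriptor of this kind is typically used for place recognition by nearest-neighbour search over the descriptors of earlier scans. The sketch below shows that generic retrieval step under stated assumptions. The function `find_revisit`, the cosine distance, the `threshold` value, and the `exclude_recent` window are hypothetical and are not taken from the paper or the repository.

```python
import numpy as np

def find_revisit(query, database, threshold=0.3, exclude_recent=50):
    """Return (index, distance) of the best earlier match.

    The index is None when no candidate is within `threshold`.
    The most recent `exclude_recent` entries are skipped so that a scan
    is not matched against its immediate neighbours in the sequence.
    """
    n_candidates = len(database) - exclude_recent
    if n_candidates <= 0:
        return None, float("inf")
    candidates = np.asarray(database[:n_candidates])
    # Cosine distance between the query and every candidate descriptor.
    q = query / np.linalg.norm(query)
    c = candidates / np.linalg.norm(candidates, axis=1, keepdims=True)
    dists = 1.0 - c @ q
    best = int(np.argmin(dists))
    if dists[best] > threshold:
        return None, float(dists[best])
    return best, float(dists[best])

rng = np.random.default_rng(1)
database = rng.standard_normal((200, 64))  # hypothetical stored descriptors

# A slightly perturbed copy of entry 10 should be matched back to entry 10.
query = database[10] + 0.01 * rng.standard_normal(64)
idx, dist = find_revisit(query, database)

# Entry 190 lies inside the exclusion window, so it cannot be returned.
idx_recent, _ = find_revisit(database[190], database)
```

In a SLAM pipeline, a returned index would be treated as a loop-closure candidate and normally verified geometrically before being added as a constraint.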

Kavisha Vidanapathirana, Peyman Moghadam, Ben Harwood, Muming Zhao, Sridha Sridharan, and Clinton Fookes. “Locus: LiDAR-based Place Recognition using Spatiotemporal Higher-Order Pooling.” IEEE International Conference on Robotics and Automation (ICRA), 2021.

This research was conducted in collaboration between CSIRO Data61 Robotics and Autonomous Systems Group and QUT and was partially funded by CSIRO’s Machine Learning and Artificial Intelligence (MLAI) Future Science Platform.

Download the full paper here.

Code repository: https://github.com/csiro-robotics/locus

For more information, contact Dr Peyman Moghadam.